The Culture Shift That Broke Tech

This weekend I gave a presentation at the “Don’t Be Evil” conference in Austin, Texas. It was hosted at the FUTO headquarters, where their tagline is “engineering solutions to big tech problems.” The conference was about exploring how to steer the world of technology toward products and services that serve the user, instead of working against the user.

As we likely all know, ‘don’t be evil’ was most widely known as Google’s old slogan. But quite a while ago, Google quietly removed it. That removal is a fitting metonym for the broader culture shift we’ve seen across tech.

Once upon a time, if a company secretly followed you across your digital life, it would have sounded like a dystopian thriller. But now it’s basically a product requirement.

In this newsletter, I want to talk about how tech culture has radically shifted, how that shift has changed the relationship between companies and users, and why so much of the public still does not see what is happening. In particular, I want to show you some egregious examples of this culture in action. Once you see how dangerous and adversarial this approach is, it becomes much clearer why we need to push back.

Spying Used to Be Creepy

The early internet, or even the internet of a decade ago, wasn’t perfect. But there were at least some broadly understood norms. First, that spying is creepy.

Bruce Schneier and Barath Raghavan wrote a great essay about shifting baseline syndrome in the privacy space. They were responding to news that Microsoft was spying on its users, and they observed that many people defended this behavior, even claiming it was ‘unfair’ to call it spying because it was simply ‘part of how cloud services work.’

The purpose of the essay was to step back and understand how this shift had happened. Because to be clear, Microsoft WAS spying on its users. What the authors found striking was that many people no longer saw this as creepy or scandalous, but as ordinary and expected. It was a vivid example of how dramatically our standards for tech companies had changed.

‘Spying is creepy’ is just one example of the culture shift.

Deception was once disreputable.

Violating user expectations was a scandal.

Consent mattered.

If a company or government was caught circumventing privacy protections, they were shamed.

That broader moral baseline was captured by the phrase ‘don’t be evil.’ It reflected a general culture in which engineers were expected to ask not just whether they could do something, but whether they should. The phrase implied restraint and moral consideration.

The New Normal

So what is the NEW normal? A culture where much of the brainpower in the tech sector, both public and private, is being directed toward things that hurt users instead of helping them.

User resistance, platform safeguards, and legal boundaries are treated as engineering puzzles to route around.

Engineering culture rewards people for overcoming constraints. But there’s often no regard for why those constraints might have been put there in the first place.

At some point over the past decade, constraint-solving became untethered from morals, and the act of bypassing a safeguard became treated as cleverness rather than misconduct.

The problem with that mindset is that safeguards are often there to protect the user and respect their privacy. So when the highest status goes to the people who find ways around them, the technology we rely on stops being designed to serve the user and starts being designed to extract more from them than they knowingly agreed to give.

Users are now seen as terrain to be mined.

Things like browser blocks, OS permissions, cookie rules, consent flows, and sandboxing should function as warning signs that say, ‘keep out.’ But instead of respecting that, much of the tech sector treats these barriers as prompts for ingenuity.

Then these workarounds are whitewashed in the terms of service and privacy policies. They’re buried in vague legalese intended to hide the details from users. And this language also affects the people building this technology: it abstracts away the meaning of what they are doing, making it feel cleaner and more palatable.

“Telemetry.”

“Signal.”

“Optimization.”

“Friction reduction.”

“Identity resolution.”

“Measurement.”

These terms make invasive acts sound neutral. Once you rename spying as measurement, you can quiet your conscience. We have baked the normalization of civil rights violations into the architecture of everyday technology, and then convinced ourselves that it’s nothing to be alarmed about.

Meta: A Case Study

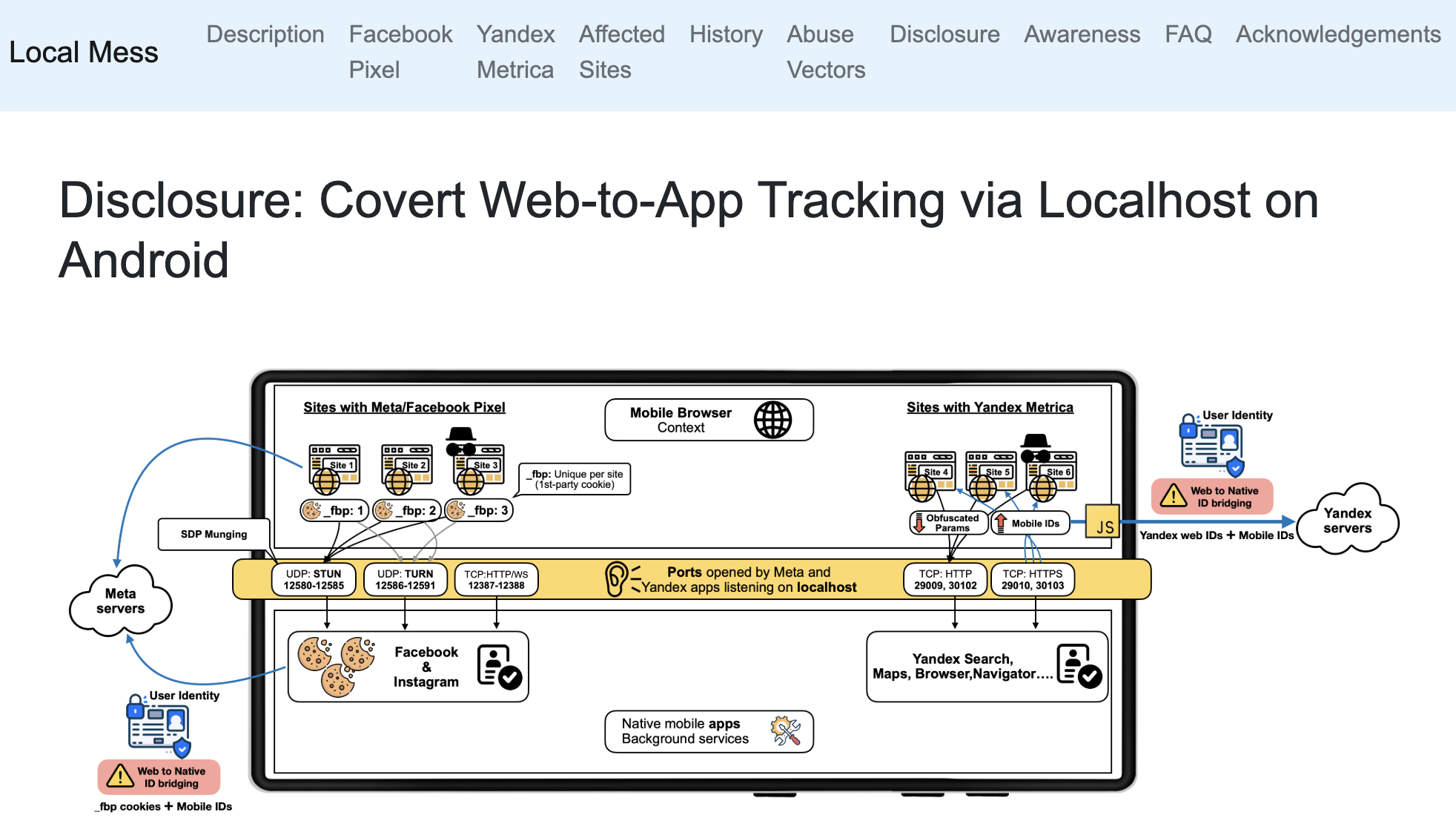

Let’s look at a case study of this kind of culture shift. Researchers found that Meta was using WebRTC on Android to spy on people’s web browsing.

What does this mean?

Normally, WebRTC is a technology intended for live, direct communication. So let’s say you have a browser-based video call. When you click “Join meeting,” WebRTC helps your browser do things like access your camera and microphone, send your audio and video in real time, and receive the other person’s audio and video.

But Meta perverted this intention and instead used this technology to deanonymize people’s browsing.

How?

Well, Meta uses tracking pixels across a large swath of the web. They’re estimated to be embedded in over 5.8 million websites. When someone visits a website with one of these pixels, the browser receives a cookie identifier. In theory, this cookie shouldn’t automatically tell Meta who that person is when visiting that website.

But the Meta Pixel also used WebRTC to send that cookie to the phone’s localhost ports on Android. The Facebook and Instagram apps were listening on those ports, and if the user was logged in to one of those apps, the cookie value was now linked to that logged-in account.

So Meta was able to tell that this signed-in user was the same person browsing that website.
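
To make the mechanism concrete, here is a minimal sketch in Python. It is a simulation, not Meta's code: it uses a plain UDP socket in place of WebRTC, runs both sides in one process, and the function name `link_cookie_to_account`, the cookie string, and the account name are all made up for illustration. The point is only to show how little it takes for a page script and a native app on the same device to compare notes over localhost.

```python
import socket


def link_cookie_to_account(cookie_value: str, logged_in_account: str) -> dict:
    """Simulate a web page handing a cookie to a native app over localhost.

    Hypothetical illustration only: real-world details (WebRTC, fixed ports,
    Android specifics) are deliberately left out.
    """
    # "App" side: a listener bound to the loopback interface.
    app = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    app.bind(("127.0.0.1", 0))  # port 0 lets the OS pick any free port
    app.settimeout(2.0)         # fail fast instead of hanging forever
    port = app.getsockname()[1]

    # "Browser" side: a script on some web page sends the cookie it was given
    # to that local port. Nothing leaves the device at this step.
    page = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    page.sendto(cookie_value.encode("utf-8"), ("127.0.0.1", port))
    page.close()

    # The app reads the cookie and pairs it with the account that is signed in.
    data, _sender = app.recvfrom(4096)
    app.close()
    return {"cookie": data.decode("utf-8"), "account": logged_in_account}


if __name__ == "__main__":
    print(link_cookie_to_account("example-cookie-123", "alice@example.com"))
```

Once the app holds both values, the "anonymous" cookie is no longer anonymous: any site that reports the same cookie can be tied to the signed-in account.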

This is a prime example of engineers using incredibly clever tools to achieve their objectives, but also being egregiously unethical. What they were doing clearly bypassed ordinary privacy expectations, such as those that come with app sandboxing, where phones are specifically designed so that one app isn’t meant to be able to spy on the activities of another app.

This is really adversarial to the user, because it treats the user’s attempt to create boundaries as a challenge to defeat. And this is an all-too-common pattern in tech culture today.

The Gap

All of this happens without the user being aware, which means there is a huge gap between what users expect and how the technology they use actually behaves. The gap points to a culture that too often treats user understanding as a threat rather than a goal.

People today still think they are getting something like siloed data collection that only operates within carefully scoped permissions and guardrails. The average person believes that incognito mode gives them privacy, that app permissions are robust safeguards, that clearing cookies resets their identity, and that if they didn’t click yes, their data probably wasn’t collected. And my favorite: that if there were actual egregious privacy violations going on, regulators or journalists would have stopped them.

But the reality is that a new culture has emerged without users realizing it, where companies are using opaque, cross-context, technically ingenious methods to identify, infer, and manipulate.

Identifiers are stitched together across contexts, with all kinds of different actors contributing to the surveillance supply chain: apps, pixels, SDKs, APIs, ad networks, analytics firms, and data brokers. These things are all tied together by entities that make a tremendous amount of money from aggregating data sets, and some of the largest clients of this data are governments.
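
For a sense of how this stitching works in principle, here is a small Python sketch. It is an assumption-laden illustration, not any vendor's actual pipeline: the function name `stitch_identities` and every identifier in the example are invented. It treats each observation as a set of identifiers seen together, and merges any observations that share at least one identifier (a standard union-find approach).

```python
from collections import defaultdict


def stitch_identities(observations):
    """Group identifiers that were ever observed together, directly or indirectly.

    `observations` is an iterable of tuples such as ("cookie:abc", "account:alice").
    Returns a list of sets, one per inferred person. Hypothetical sketch only.
    """
    parent = {}

    def find(x):
        # Register unseen identifiers, then walk up to the cluster's root.
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path compression
            x = parent[x]
        return x

    def union(a, b):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    for ids in observations:
        ids = list(ids)
        if not ids:
            continue
        find(ids[0])  # make sure single-identifier observations are recorded
        for other in ids[1:]:
            union(ids[0], other)

    clusters = defaultdict(set)
    for ident in list(parent):
        clusters[find(ident)].add(ident)
    return list(clusters.values())


if __name__ == "__main__":
    seen = [
        ("cookie:abc", "account:alice"),   # e.g. from a pixel plus an app
        ("account:alice", "ad_id:123"),    # e.g. from an SDK
        ("cookie:zzz", "email_hash:9f"),   # an unrelated person
    ]
    for cluster in stitch_identities(seen):
        print(sorted(cluster))
```

Notice that no single source ever saw `cookie:abc` and `ad_id:123` together. They end up in the same profile anyway, because each was linked to the same account somewhere along the supply chain.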

And as far as Chrome’s incognito mode is concerned, we all know how that ends.

Then there are app permissions: people tend to think these are a complete privacy control, when really they are only one layer, and companies usually find ways to learn far more than users think those permission screens allow.

Most of the surveillance going on in our day-to-day lives operates completely outside the average person’s comprehension.

The gap between perception and reality is part of the scandal. Companies would not work so hard to hide how this surveillance works if they thought users would be comfortable with it.

And the fact that they do it anyway is deeply unethical.

Why This Is So Dangerous

Now why does all of this matter?

Most people think this is about ads. Phrases like “surveillance capitalism” make people think this is just about companies trying to sell us a better pair of shoes.

That data can absolutely be used to sell us a better pair of shoes. But the same data can be used to infer our thoughts, habits, and political persuasion.

The same infrastructure of surveillance that identifies consumers can identify potential dissidents who might become part of a protest movement or opposition party, and stop that political opposition before it even forms.

The same real-time bidding systems that vie for our attention can be used to influence us not just in consumer choices, but in our political beliefs. They can shape our reality. They can get us to hate certain groups of people because we’re made to believe that they hate us.

When companies normalize invasive surveillance, they also normalize the infrastructure that governments, litigants, bad insiders, and future authoritarian actors can exploit.

Privacy versus surveillance is actually a battle between:

Freedom of thought and manipulation.

Freedom of association and censorship.

Human dignity and reputational vulnerability.

Liberty and the chilling effects that happen when populations start to realize that everything they say or do is monitored.

The erosion of privacy is about the quiet erosion of civil rights.

The most dangerous trick surveillance ever pulled was not hiding itself.

It was teaching us to call it normal.

How We Got Here

All of this happened through many different mechanisms.

First of all, this surveillance happened gradually.

When we all first signed up to get a free Gmail account, none of us were told that this would be used to mine our most personal communications to learn about us, and that this data would eventually end up in the hands of countless third parties as it passed from one company to another through a vast ecosystem of data brokers.

At worst, we might have assumed Google was just selling keyword ads in its search engine. What crept in instead, largely without users noticing, was one of the most sophisticated surveillance systems ever built. And it wasn’t confined to just Google. It spread into the very infrastructure of the internet and became embedded in nearly every tool we use.

No one was told that when they bought an Android phone or an iPhone and left their Wi-Fi on, they were essentially wardriving for Google and Apple, helping build a robust database of every SSID in existence that could be used to monitor people’s exact locations as they moved around.

The harms of these systems were distributed and often invisible, and that’s why no one really looked too closely or pushed back too much.

Because at the individual level, people asked “what do I have to hide?”

But at a societal level, this is a comprehensive infrastructure of surveillance that governments have basically total access to, that can be fine-tuned to target anyone, and that has become one of the most potent mechanisms for control we’ve ever seen.

On top of this, the gap between user understanding and the technical reality of these systems was so great that privacy advocates were caricatured as paranoid. “Things couldn’t possibly be as bad as they say. Surely we’d know about it.”

And inside some of the worst offenders, every new violation reset the internal baseline of what was acceptable. Over time, people kept asking “can we” but stopped asking “should we.” Surveillance stopped being a scandal and became a business model. A norm.

The baseline of acceptable surveillance has shifted to a level that would have been unthinkable ten or twenty years ago. Those who evolved alongside it became frogs in boiling water, and the younger generations who simply inherited these degraded standards were told that “this is just how tech works.”

Today, surveillance has exploded because users are conditioned, language is sanitized into vague legalese, and harms are hidden behind technical abstraction.

The “Don’t Be Evil” Test

So what now? Is it possible to reclaim this ground that’s been lost?

I think we can. But we have to be strong enough to demand higher standards.

I propose the “don’t be evil” test.

What does this test look like?

If a system relies on deception, it fails the test.

If a user would be shocked to learn what is happening in the tools they use, it fails the test.

If a company is circumventing privacy safeguards, it fails the test.

If meaningful consent requires a PhD to understand it, it fails the test.

If data from one context is silently merged with another without users understanding what is going on, it fails the test.

If engineers are rewarded for bypassing protections, the company or government agency fails the test.

We Can Raise the Standard Again

Tech has this incredible, almost utopian promise.

But that future doesn’t happen on autopilot. We have to build it.

Tech itself is neutral and can be used for good or bad. So much tech today has been hijacked for surveillance and control, but it doesn’t have to be.

We have to feed a future where tech works for the user instead of against them, and helps people instead of quietly herding them into systems of control that are easy to abuse.

We must build technology and participate in ecosystems that help build robust protections for individual freedom, human agency, autonomy, and flourishing.

We need to start enforcing higher standards, and stop excusing the toxic culture that has overtaken so much of the tech world.

We must stop building a world where technical ingenuity is praised even when it is used against the people it claims to serve. Where the smartest people in the room are rewarded for finding ever more clever ways to violate us.

We can build a better world.

We can raise the standard again.

We can make spying creepy again.

We can make “don’t be evil” mean something again.

Yours In Privacy,

Naomi

Consider supporting our nonprofit so that we can fund more research into the surveillance baked into our everyday tech. We want to educate as many people as possible about what’s going on, and help write a better future. Visit LudlowInstitute.org/donate to set up a monthly, tax-deductible donation.

NBTV. Because Privacy Matters.